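I. What is AIUI

AIUI is a human-computer interaction solution that integrates voice wake-up, speech recognition, semantic understanding, a content platform, and speech synthesis (which offers one more voice than the standard speech synthesis service).

New users get a free quota of 20 device installations and 500 interactions per day.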

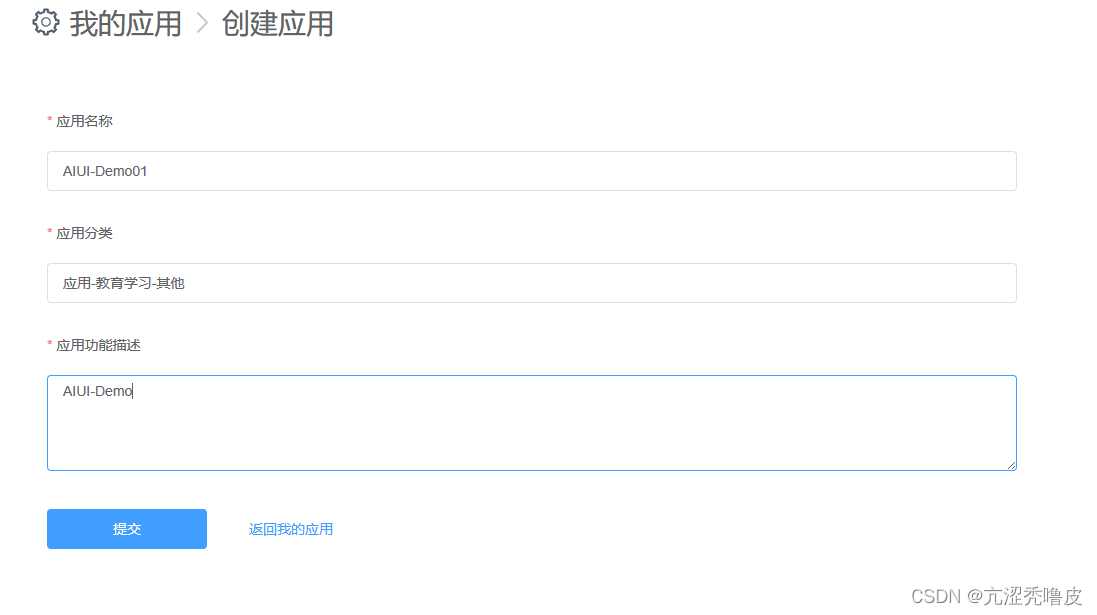

II. Creating an AIUI application

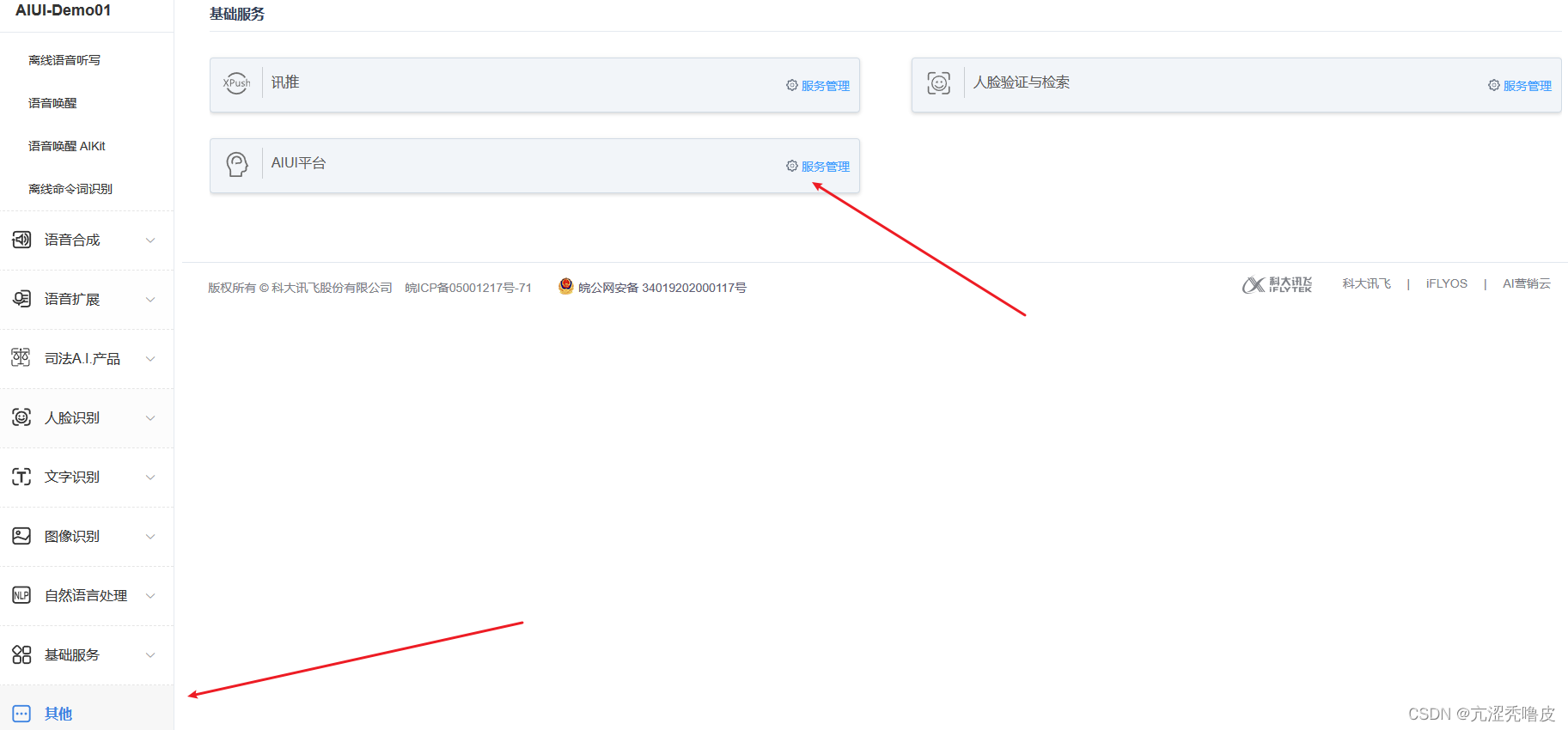

Click Service Management to open the AIUI console.

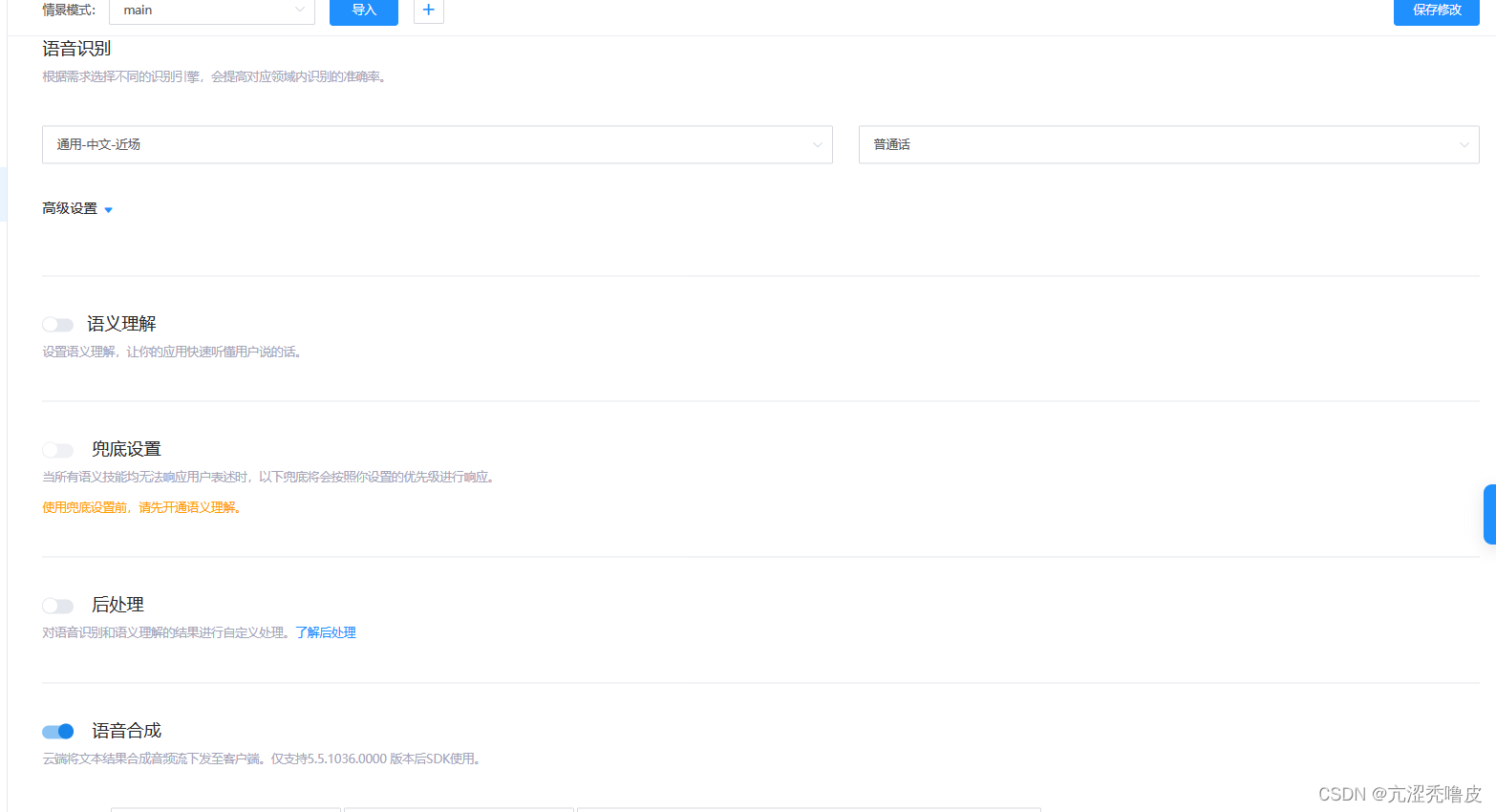

四、AIUI设置

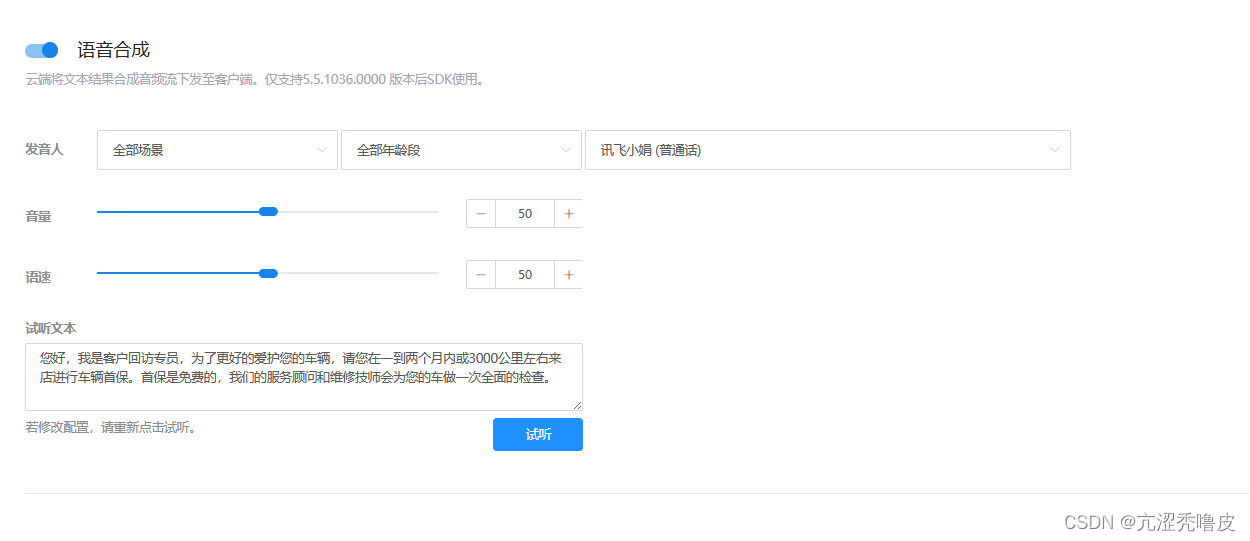

默认自带有语音识别的,如果需要AIUI回答你,则需要开启语义理解,如果需要有声音则需要开启语音合成,否则调用SDK的时候是不会返回语义理解和语音合成的数据的

可以在上面的位置直接修改发音人,返回的语音合成数据也会改变

V. Importing into the project

1. Download the SDK

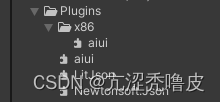

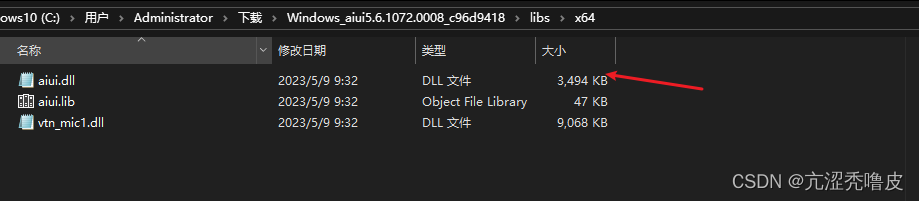

2. Import aiui.dll into Unity's Plugins folder.

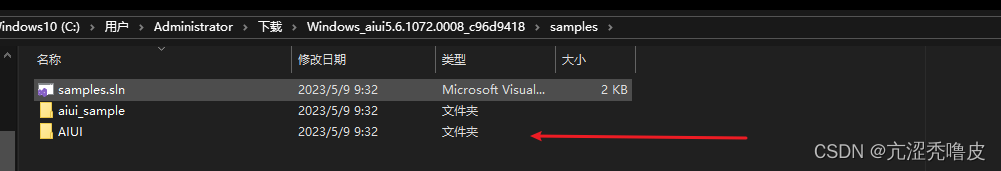

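3. Put the AIUI folder into the StreamingAssets folder. It holds configuration such as the APPID and the speech-synthesis settings. The program only needs to read this configuration file at startup, so unlike iFlytek's standalone speech synthesis and speech recognition modules, there is no need to log in and log out.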

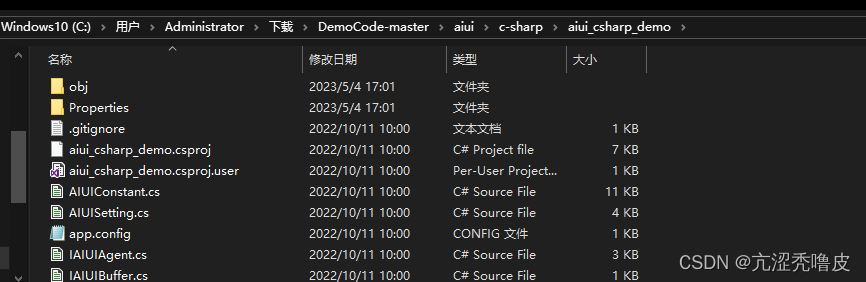
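Because the configuration file is read from a fixed location under StreamingAssets, it can help to build the path in one place and fail early when the file is missing. The following is a minimal sketch in plain C#; `AiuiConfigPath`, `Build`, and `Load` are hypothetical names chosen for illustration and are not part of the AIUI SDK. The folder layout `AIUI/cfg/aiui.cfg` is the one used by the code in this article.

```csharp
using System.IO;

public static class AiuiConfigPath
{
    // Builds <streamingAssetsRoot>/AIUI/cfg/aiui.cfg using the platform's separator.
    public static string Build(string streamingAssetsRoot)
    {
        return Path.Combine(streamingAssetsRoot, "AIUI", "cfg", "aiui.cfg");
    }

    // Reads the config text, failing with a clear message when the file is missing.
    public static string Load(string streamingAssetsRoot)
    {
        string path = Build(streamingAssetsRoot);
        if (!File.Exists(path))
        {
            throw new FileNotFoundException("AIUI config not found", path);
        }
        return File.ReadAllText(path);
    }
}
```

In Unity, the root would be `Application.streamingAssetsPath`, for example `AiuiConfigPath.Load(Application.streamingAssetsPath)`.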

4. A set of utility classes wraps aiui.dll. They are taken from the official C# demo.

5. Initialize the configuration

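    void Start()
    {
        // Give every device a unique serial number (SN), ideally derived from hardware
        // information such as the MAC address or the device serial number, so that
        // installations are counted correctly and a reflash or an uninstall/reinstall
        // is not counted twice.
        AIUISetting.setSystemInfo(AIUIConstant.KEY_SERIAL_NUM, GetMac());

        // Read the AIUI configuration from StreamingAssets/AIUI/cfg/aiui.cfg.
        string cfgPath = Path.Combine(Application.streamingAssetsPath, "AIUI", "cfg", "aiui.cfg");
        string cfg = File.ReadAllText(cfgPath);
        agent = IAIUIAgent.Create(cfg, onEvent);

        // Start the AIUI service.
        IAIUIMessage msg_start = IAIUIMessage.Create(AIUIConstant.CMD_START, 0, 0, "", IBuffer.Zero);
        agent.SendMessage(msg_start);
        msg_start.Destroy();
        msg_start = null;
    }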

6. Send a message. Here the audio recorded from the microphone is converted to a byte[] and then sent to the SDK.

    public IEnumerator SendMassage(byte[] data)
    {
        // Wake up
        IAIUIMessage msg_wakeup = IAIUIMessage.Create(AIUIConstant.CMD_WAKEUP, 0, 0, "", IBuffer.Zero);
        agent.SendMessage(msg_wakeup);
        msg_wakeup.Destroy();
        msg_wakeup = null;
        yield return new WaitForSeconds(0.2f);
        print("wakeup");

        // Start recording
        IAIUIMessage msg_start_r = IAIUIMessage.Create(AIUIConstant.CMD_START_RECORD, 0, 0,
            "data_type=audio,interact_mode=oneshot", IBuffer.Zero);
        agent.SendMessage(msg_start_r);
        msg_start_r.Destroy();
        msg_start_r = null;

        // Send the audio
        IBuffer buf_1 = IBuffer.FromData(data, data.Length);
        IAIUIMessage msg_write_audio = IAIUIMessage.Create(AIUIConstant.CMD_WRITE, 0, 0, "data_type=audio", buf_1);
        agent.SendMessage(msg_write_audio);
        msg_write_audio.Destroy();
        msg_write_audio = null;
        buf_1 = null;
        yield return new WaitForSeconds(0.04f);
    }
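Step 6 takes the microphone audio as a byte[], but Unity's `AudioClip.GetData` returns samples as floats in the range [-1, 1], so a conversion is needed first. The following is a minimal sketch, assuming the SDK expects 16-bit little-endian PCM (which is consistent with the 16 kHz, 16-bit, mono WAV header used by the full listing in this article). `PcmConverter` and `FloatToPcm16` are hypothetical names and not part of the AIUI SDK.

```csharp
using System;

public static class PcmConverter
{
    // Converts float samples in [-1, 1] to 16-bit little-endian PCM bytes.
    public static byte[] FloatToPcm16(float[] samples)
    {
        byte[] pcm = new byte[samples.Length * 2];
        for (int i = 0; i < samples.Length; i++)
        {
            // Clamp first so out-of-range input cannot overflow the short.
            float clamped = Math.Max(-1f, Math.Min(1f, samples[i]));
            short value = (short)Math.Round(clamped * short.MaxValue);
            pcm[2 * i] = (byte)(value & 0xFF);            // low byte
            pcm[2 * i + 1] = (byte)((value >> 8) & 0xFF); // high byte
        }
        return pcm;
    }
}
```

The resulting array could then be passed to `SendMassage`, for example through `StartCoroutine(AIUIManager.Instance.SendMassage(pcm))`.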

7. Handle the returned messages

    private void onEvent(IAIUIEvent ev)
    {
        switch (ev.GetEventType())
        {
            case AIUIConstant.EVENT_STATE:
                switch (ev.GetArg1())
                {
                    case AIUIConstant.STATE_IDLE:
                        print("EVENT_STATE: IDLE");
                        break;
                    case AIUIConstant.STATE_READY:
                        print("EVENT_STATE: READY");
                        break;
                    case AIUIConstant.STATE_WORKING:
                        print("EVENT_STATE: WORKING");
                        break;
                }
                break;
            case AIUIConstant.EVENT_WAKEUP:
                Debug.LogFormat("EVENT_WAKEUP: {0}", ev.GetInfo());
                break;
            case AIUIConstant.EVENT_SLEEP:
                Debug.LogFormat("EVENT_SLEEP: arg1={0}", ev.GetArg1());
                break;
            case AIUIConstant.EVENT_VAD:
                switch (ev.GetArg1())
                {
                    case AIUIConstant.VAD_BOS:
                        print("EVENT_VAD: BOS");
                        break;
                    case AIUIConstant.VAD_EOS:
                        print("EVENT_VAD: EOS");
                        break;
                }
                break;
            case AIUIConstant.EVENT_RESULT: // data returned on success
                try
                {
                    var info = JsonConvert.DeserializeObject<JObject>(ev.GetInfo());
                    var datas = info["data"] as JArray;
                    var data = datas[0] as JObject;
                    var param = data["params"] as JObject;
                    var contents = data["content"] as JArray;
                    var content = contents[0] as JObject;
                    string sub = param["sub"].ToString();
                    string cnt_id = content["cnt_id"].ToString();
                    int dataLen = 0;
                    byte[] buffer = ev.GetData().GetBinary(cnt_id, ref dataLen);
                    Debug.LogFormat("sub: {0} ,info: {1}", sub, ev.GetInfo());
                    switch (sub)
                    {
                        case "iat": // speech recognition result
                            string jsonObject = Encoding.UTF8.GetString(buffer).Replace('\0', ' ');
                            JsonData deJson = JsonMapper.ToObject(jsonObject);
                            StringBuilder cont = new StringBuilder();
                            foreach (JsonData item in deJson["text"]["ws"])
                            {
                                cont.Append(item["cw"][0]["w"]);
                            }
                            // Switch to the main thread
                            Loom.QueueOnMainThread(() => {
                                sendText.text = cont.ToString();
                            });
                            break;
                        case "nlp": // semantic understanding result
                            string jsonObject1 = Encoding.UTF8.GetString(buffer).Replace('\0', ' ');
                            JsonData deJson1 = JsonMapper.ToObject(jsonObject1);
                            // Switch to the main thread
                            Loom.QueueOnMainThread(() => {
                                returnText.text = deJson1["intent"]["answer"]["text"].ToString();
                            });
                            break;
                        case "tts": // speech synthesis result
                            string jsonObject2 = ev.GetInfo().Replace('\0', ' ');
                            JsonData deJson2 = JsonMapper.ToObject(jsonObject2);
                            // Copy the data. Part of this loop was lost from the original text;
                            // the bound dataLen (the byte count reported by GetBinary) and the
                            // dts check below are reconstructed from the full listing.
                            for (int i = 0; i < dataLen; i++)
                            {
                                audioData.Add(buffer[i]);
                            }
                            // dts == 2 marks the last chunk of the synthesized audio.
                            if (int.Parse(deJson2["data"][0]["content"][0]["dts"].ToString()) == 2)
                            {
                                Loom.QueueOnMainThread(() => {
                                    Save(); // Save() is not shown in this article
                                    //audioData = new List<byte>();
                                    // Speech synthesis playback
                                    //audioSource.clip = Clip(audioData.ToArray());
                                    //audioSource.loop = false;
                                    //audioSource.Play();
                                });
                            }
                            break;
                        case "asr":
                            break;
                        default:
                            break;
                    }
                    datas = null;
                    data = null;
                    param = null;
                    contents = null;
                    content = null;
                    info = null;
                }
                catch (Exception e)
                {
                    print(e.Message);
                }
                break;
            case AIUIConstant.EVENT_ERROR:
                Debug.LogFormat("EVENT_ERROR: {0} {1}", ev.GetArg1(), ev.GetInfo());
                break;
        }
    }
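The handler above hands UI updates to `Loom.QueueOnMainThread`, whose source is not shown in this article. To illustrate the general idea behind such a helper, here is a minimal sketch of a thread-safe action queue in plain C#. `MainThreadQueue`, `Enqueue`, and `RunPending` are hypothetical names; this is not Loom's actual implementation.

```csharp
using System;
using System.Collections.Concurrent;

public sealed class MainThreadQueue
{
    private readonly ConcurrentQueue<Action> pending = new ConcurrentQueue<Action>();

    // Safe to call from any thread, for example from the SDK's event callback.
    public void Enqueue(Action action)
    {
        pending.Enqueue(action);
    }

    // Call this from the main thread (in Unity, from a MonoBehaviour's Update).
    // Runs every queued action and returns how many were executed.
    public int RunPending()
    {
        int executed = 0;
        while (pending.TryDequeue(out Action action))
        {
            action();
            executed++;
        }
        return executed;
    }
}
```

The callback thread only enqueues work, and the actions run later on whichever thread calls `RunPending`, which is what makes it safe to touch Unity objects inside them.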

Full code of AIUIManager

    using aiui;
    using LitJson;
    using Newtonsoft.Json;
    using Newtonsoft.Json.Linq;
    using System;
    using System.Collections;
    using System.Collections.Generic;
    using System.IO;
    using System.Net.NetworkInformation;
    using System.Runtime.InteropServices;
    using System.Security.Cryptography;
    using System.Text;
    using UnityEngine;
    using UnityEngine.UI;

    // iFlytek AIUI
    public class AIUIManager : MonoBehaviour
    {
        public static AIUIManager Instance;
        private IAIUIAgent agent;
        public AudioSource audioSource;
        public Text returnText;
        public Text sendText;

        private void Awake()
        {
            Instance = this;
        }

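        // Start is called before the first frame update
        void Start()
        {
            // Give every device a unique serial number (SN), ideally derived from hardware
            // information such as the MAC address or the device serial number, so that
            // installations are counted correctly and a reflash or an uninstall/reinstall
            // is not counted twice.
            AIUISetting.setSystemInfo(AIUIConstant.KEY_SERIAL_NUM, GetMac());

            // Read the AIUI configuration from StreamingAssets/AIUI/cfg/aiui.cfg.
            string cfgPath = Path.Combine(Application.streamingAssetsPath, "AIUI", "cfg", "aiui.cfg");
            string cfg = File.ReadAllText(cfgPath);
            agent = IAIUIAgent.Create(cfg, onEvent);

            // Start the AIUI service.
            IAIUIMessage msg_start = IAIUIMessage.Create(AIUIConstant.CMD_START, 0, 0, "", IBuffer.Zero);
            agent.SendMessage(msg_start);
            msg_start.Destroy();
            msg_start = null;
        }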

        public IEnumerator SendMassage(byte[] data)
        {
            // Wake up
            IAIUIMessage msg_wakeup = IAIUIMessage.Create(AIUIConstant.CMD_WAKEUP, 0, 0, "", IBuffer.Zero);
            agent.SendMessage(msg_wakeup);
            msg_wakeup.Destroy();
            msg_wakeup = null;
            yield return new WaitForSeconds(0.2f);
            print("wakeup");

            // Start recording
            IAIUIMessage msg_start_r = IAIUIMessage.Create(AIUIConstant.CMD_START_RECORD, 0, 0,
                "data_type=audio,interact_mode=oneshot", IBuffer.Zero);
            agent.SendMessage(msg_start_r);
            msg_start_r.Destroy();
            msg_start_r = null;

            // Send the audio
            IBuffer buf_1 = IBuffer.FromData(data, data.Length);
            IAIUIMessage msg_write_audio = IAIUIMessage.Create(AIUIConstant.CMD_WRITE, 0, 0, "data_type=audio", buf_1);
            agent.SendMessage(msg_write_audio);
            msg_write_audio.Destroy();
            msg_write_audio = null;
            buf_1 = null;
            yield return new WaitForSeconds(0.04f);
        }

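        public string GetMac()
        {
            NetworkInterface[] interfaces = NetworkInterface.GetAllNetworkInterfaces();
            string mac = "";
            foreach (NetworkInterface ni in interfaces)
            {
                if (ni.NetworkInterfaceType != NetworkInterfaceType.Loopback)
                {
                    mac += ni.GetPhysicalAddress().ToString();
                }
            }
            // Hash the concatenated MAC addresses with MD5 and return the digest as hex.
            // The end of this method was lost from the original listing; the loop body
            // and return statement are reconstructed.
            byte[] result = Encoding.Default.GetBytes(mac);
            result = new MD5CryptoServiceProvider().ComputeHash(result);
            StringBuilder builder = new StringBuilder();
            for (int i = 0; i < result.Length; i++)
            {
                builder.Append(result[i].ToString("x2"));
            }
            return builder.ToString();
        }

        // The first part of this handler was also lost from the original listing and is
        // restored here from the version shown in step 7.
        private void onEvent(IAIUIEvent ev)
        {
            switch (ev.GetEventType())
            {
                case AIUIConstant.EVENT_STATE:
                    switch (ev.GetArg1())
                    {
                        case AIUIConstant.STATE_IDLE:
                            print("EVENT_STATE: IDLE");
                            break;
                        case AIUIConstant.STATE_READY:
                            print("EVENT_STATE: READY");
                            break;
                        case AIUIConstant.STATE_WORKING:
                            print("EVENT_STATE: WORKING");
                            break;
                    }
                    break;
                case AIUIConstant.EVENT_WAKEUP:
                    Debug.LogFormat("EVENT_WAKEUP: {0}", ev.GetInfo());
                    break;
                case AIUIConstant.EVENT_SLEEP:
                    Debug.LogFormat("EVENT_SLEEP: arg1={0}", ev.GetArg1());
                    break;
                case AIUIConstant.EVENT_VAD:
                    switch (ev.GetArg1())
                    {
                        case AIUIConstant.VAD_BOS:
                            print("EVENT_VAD: BOS");
                            break;
                        case AIUIConstant.VAD_EOS:
                            print("EVENT_VAD: EOS");
                            break;
                    }
                    break;
                case AIUIConstant.EVENT_RESULT: // data returned on success
                    try
                    {
                        var info = JsonConvert.DeserializeObject<JObject>(ev.GetInfo());
                        var datas = info["data"] as JArray;
                        var data = datas[0] as JObject;
                        var param = data["params"] as JObject;
                        var contents = data["content"] as JArray;
                        var content = contents[0] as JObject;
                        string sub = param["sub"].ToString();
                        string cnt_id = content["cnt_id"].ToString();
                        int dataLen = 0;
                        byte[] buffer = ev.GetData().GetBinary(cnt_id, ref dataLen);
                        Debug.LogFormat("sub: {0} ,info: {1}", sub, ev.GetInfo());
                        switch (sub)
                        {
                            case "iat": // speech recognition result
                                string jsonObject = Encoding.UTF8.GetString(buffer).Replace('\0', ' ');
                                JsonData deJson = JsonMapper.ToObject(jsonObject);
                                StringBuilder cont = new StringBuilder();
                                foreach (JsonData item in deJson["text"]["ws"])
                                {
                                    cont.Append(item["cw"][0]["w"]);
                                }
                                // Switch to the main thread
                                Loom.QueueOnMainThread(() => {
                                    sendText.text = cont.ToString();
                                });
                                break;
                            case "nlp": // semantic understanding result
                                string jsonObject1 = Encoding.UTF8.GetString(buffer).Replace('\0', ' ');
                                JsonData deJson1 = JsonMapper.ToObject(jsonObject1);
                                // Switch to the main thread
                                Loom.QueueOnMainThread(() => {
                                    returnText.text = deJson1["intent"]["answer"]["text"].ToString();
                                });
                                break;
                            case "tts": // speech synthesis result
                                string jsonObject2 = ev.GetInfo().Replace('\0', ' ');
                                JsonData deJson2 = JsonMapper.ToObject(jsonObject2);
                                // The code between the dts check and the playback callback was
                                // lost from the original listing. What follows is a reconstruction
                                // based on the description after the listing: append every PCM
                                // chunk, and on the last chunk (dts == 2) prepend a WAV header
                                // and play the result.
                                for (int i = 0; i < dataLen; i++)
                                {
                                    audioData.Add(buffer[i]);
                                }
                                if (int.Parse(deJson2["data"][0]["content"][0]["dts"].ToString()) == 2)
                                {
                                    audioData.InsertRange(0, StructToBytes(getWave_Header(audioData.Count)));
                                    Loom.QueueOnMainThread(() => {
                                        //Save();
                                        // Play the synthesized speech
                                        audioSource.clip = Clip(audioData.ToArray());
                                        //audioSource.loop = false;
                                        audioSource.Play();
                                        audioData = new List<byte>();
                                    });
                                }
                                break;
                            case "asr":
                                break;
                            default:
                                break;
                        }
                        datas = null;
                        data = null;
                        param = null;
                        contents = null;
                        content = null;
                        info = null;
                    }
                    catch (Exception e)
                    {
                        print(e.Message);
                    }
                    break;
                case AIUIConstant.EVENT_ERROR:
                    Debug.LogFormat("EVENT_ERROR: {0} {1}", ev.GetArg1(), ev.GetInfo());
                    break;
            }
        }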

        // Accumulates the synthesized audio bytes across chunks.
        private List<byte> audioData = new List<byte>();

        /// <summary>
        /// Converts a struct to a byte sequence.
        /// </summary>
        /// <param name="structure">The struct to convert.</param>
        /// <returns>The byte sequence.</returns>
        public static byte[] StructToBytes(object structure)
        {
            int size = Marshal.SizeOf(structure);
            IntPtr buffer = Marshal.AllocHGlobal(size);
            try
            {
                Marshal.StructureToPtr(structure, buffer, false);
                Byte[] bytes = new Byte[size];
                Marshal.Copy(buffer, bytes, 0, size);
                return bytes;
            }
            finally
            {
                Marshal.FreeHGlobal(buffer);
            }
        }

        /// <summary>
        /// Builds the WAV file header for a data section of the given length.
        /// </summary>
        /// <param name="data_len">Length of the audio data in bytes.</param>
        /// <returns>The WAV file header struct.</returns>
        public static WAVE_Header getWave_Header(int data_len)
        {
            WAVE_Header wav_Header = new WAVE_Header();
            wav_Header.RIFF_ID = 0x46464952;      // the characters "RIFF"
            wav_Header.File_Size = data_len + 36;
            wav_Header.RIFF_Type = 0x45564157;    // the characters "WAVE"
            wav_Header.FMT_ID = 0x20746D66;       // the characters "fmt "
            wav_Header.FMT_Size = 16;
            wav_Header.FMT_Tag = 0x0001;          // PCM
            wav_Header.FMT_Channel = 1;           // mono
            wav_Header.FMT_SamplesPerSec = 16000; // sample rate
            wav_Header.AvgBytesPerSec = 32000;    // bytes per second
            wav_Header.BlockAlign = 2;            // 2 bytes per sample
            wav_Header.BitsPerSample = 16;        // 16 bits per sample
            wav_Header.DATA_ID = 0x61746164;      // the characters "data"
            wav_Header.DATA_Size = data_len;
            return wav_Header;
        }

        /// <summary>
        /// WAV file header.
        /// </summary>
        public struct WAVE_Header
        {
            public int RIFF_ID;           // 4 bytes, "RIFF"
            public int File_Size;         // 4 bytes, file length
            public int RIFF_Type;         // 4 bytes, "WAVE"
            public int FMT_ID;            // 4 bytes, "fmt "
            public int FMT_Size;          // 4 bytes, 16 or 18; 18 means extra information follows
            public short FMT_Tag;         // 2 bytes, encoding, usually 0x0001
            public ushort FMT_Channel;    // 2 bytes, channel count: 1 = mono, 2 = stereo
            public int FMT_SamplesPerSec; // 4 bytes, sample rate
            public int AvgBytesPerSec;    // 4 bytes, bytes per second (data rate)
            public ushort BlockAlign;     // 2 bytes, block alignment (bytes per sample)
            public ushort BitsPerSample;  // 2 bytes, bits per sample
            public int DATA_ID;           // 4 bytes, "data"
            public int DATA_Size;         // 4 bytes, length of the audio data
        }

        #region Audio playback

        public static AudioClip Clip(byte[] fileBytes, int offsetSamples = 0, string name = "ifly")
        {
            //string riff = Encoding.ASCII.GetString (fileBytes, 0, 4);
            //string wave = Encoding.ASCII.GetString (fileBytes, 8, 4);
            int subchunk1 = BitConverter.ToInt32(fileBytes, 16);
            ushort audioFormat = BitConverter.ToUInt16(fileBytes, 20);
            // NB: Only uncompressed PCM wav files are supported.
            string formatCode = FormatCode(audioFormat);
            //Debug.AssertFormat(audioFormat == 1 || audioFormat == 65534, "Detected format code '{0}' {1}, but only PCM and WaveFormatExtensable uncompressed formats are currently supported.", audioFormat, formatCode);
            ushort channels = BitConverter.ToUInt16(fileBytes, 22);
            int sampleRate = BitConverter.ToInt32(fileBytes, 24);
            //int byteRate = BitConverter.ToInt32 (fileBytes, 28);
            //UInt16 blockAlign = BitConverter.ToUInt16 (fileBytes, 32);
            ushort bitDepth = BitConverter.ToUInt16(fileBytes, 34);
            int headerOffset = 16 + 4 + subchunk1 + 4;
            int subchunk2 = BitConverter.ToInt32(fileBytes, headerOffset);
            //Debug.LogFormat ("riff={0} wave={1} subchunk1={2} format={3} channels={4} sampleRate={5} byteRate={6} blockAlign={7} bitDepth={8} headerOffset={9} subchunk2={10} filesize={11}", riff, wave, subchunk1, formatCode, channels, sampleRate, byteRate, blockAlign, bitDepth, headerOffset, subchunk2, fileBytes.Length);
            //Log.Info(bitDepth);
            float[] data;
            switch (bitDepth)
            {
                case 8:
                    data = Convert8BitByteArrayToAudioClipData(fileBytes, headerOffset, subchunk2);
                    break;
                case 16:
                    data = Convert16BitByteArrayToAudioClipData(fileBytes, headerOffset, subchunk2);
                    break;
                case 24:
                    data = Convert24BitByteArrayToAudioClipData(fileBytes, headerOffset, subchunk2);
                    break;
                case 32:
                    data = Convert32BitByteArrayToAudioClipData(fileBytes, headerOffset, subchunk2);
                    break;
                default:
                    throw new Exception(bitDepth + " bit depth is not supported.");
            }
            AudioClip audioClip = AudioClip.Create(name, data.Length, channels, sampleRate, false);
            audioClip.SetData(data, 0);
            return audioClip;
        }

        private static string FormatCode(UInt16 code)
        {
            switch (code)
            {
                case 1:
                    return "PCM";
                case 2:
                    return "ADPCM";
                case 3:
                    return "IEEE";
                case 7:
                    return "μ-law";
                case 65534:
                    return "WaveFormatExtensable";
                default:
                    Debug.LogWarning("Unknown wav code format:" + code);
                    return "";
            }
        }

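        #region wav file bytes to Unity AudioClip conversion methods

        // The loop bodies and method signatures below were lost from the original listing
        // and are reconstructed from the surviving first lines of each method.
        private static float[] Convert8BitByteArrayToAudioClipData(byte[] source, int headerOffset, int dataSize)
        {
            int wavSize = BitConverter.ToInt32(source, headerOffset);
            headerOffset += sizeof(int);
            Debug.AssertFormat(wavSize > 0 && wavSize == dataSize, "Failed to get valid 8-bit wav size: {0} from data bytes: {1} at offset: {2}", wavSize, dataSize, headerOffset);
            float[] data = new float[wavSize];
            int i = 0;
            while (i < wavSize)
            {
                // 8-bit WAV samples are unsigned with a midpoint of 128.
                data[i] = (source[headerOffset + i] - 128) / 128f;
                ++i;
            }
            return data;
        }

        private static float[] Convert16BitByteArrayToAudioClipData(byte[] source, int headerOffset, int dataSize)
        {
            int wavSize = BitConverter.ToInt32(source, headerOffset);
            headerOffset += sizeof(int);
            Debug.AssertFormat(wavSize > 0 && wavSize == dataSize, "Failed to get valid 16-bit wav size: {0} from data bytes: {1} at offset: {2}", wavSize, dataSize, headerOffset);
            int x = sizeof(Int16); // block size = 2
            int convertedSize = wavSize / x;
            float[] data = new float[convertedSize];
            Int16 maxValue = Int16.MaxValue;
            int offset = 0;
            int i = 0;
            while (i < convertedSize)
            {
                offset = i * x + headerOffset;
                data[i] = (float)BitConverter.ToInt16(source, offset) / maxValue;
                ++i;
            }
            return data;
        }

        private static float[] Convert24BitByteArrayToAudioClipData(byte[] source, int headerOffset, int dataSize)
        {
            int wavSize = BitConverter.ToInt32(source, headerOffset);
            headerOffset += sizeof(int);
            Debug.AssertFormat(wavSize > 0 && wavSize == dataSize, "Failed to get valid 24-bit wav size: {0} from data bytes: {1} at offset: {2}", wavSize, dataSize, headerOffset);
            int x = 3; // block size = 3
            int convertedSize = wavSize / x;
            int maxValue = Int32.MaxValue;
            float[] data = new float[convertedSize];
            byte[] block = new byte[sizeof(int)]; // using a 4 byte block for copying 3 bytes, then copy bytes with 1 offset
            int offset = 0;
            int i = 0;
            while (i < convertedSize)
            {
                offset = i * x + headerOffset;
                Buffer.BlockCopy(source, offset, block, 1, x);
                data[i] = (float)BitConverter.ToInt32(block, 0) / maxValue;
                ++i;
            }
            return data;
        }

        private static float[] Convert32BitByteArrayToAudioClipData(byte[] source, int headerOffset, int dataSize)
        {
            int wavSize = BitConverter.ToInt32(source, headerOffset);
            headerOffset += sizeof(int);
            Debug.AssertFormat(wavSize > 0 && wavSize == dataSize, "Failed to get valid 32-bit wav size: {0} from data bytes: {1} at offset: {2}", wavSize, dataSize, headerOffset);
            int x = sizeof(float); // block size = 4
            int convertedSize = wavSize / x;
            Int32 maxValue = Int32.MaxValue;
            float[] data = new float[convertedSize];
            int offset = 0;
            int i = 0;
            while (i < convertedSize)
            {
                offset = i * x + headerOffset;
                data[i] = (float)BitConverter.ToInt32(source, offset) / maxValue;
                ++i;
            }
            return data;
        }

        #endregion

        #endregion
    }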

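Messages arrive on a thread other than the main thread, so you must switch to the main thread before calling Unity APIs; otherwise Unity reports an error. The incoming data can either be saved locally or used and played directly from memory. (The data arrives as PCM, so a WAV header has to be written before Unity can use it directly.) Speech-synthesis data is delivered in multiple segments, so the segments must be combined.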
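The two points above (combine the segments, then add a WAV header) can be sketched in plain C# without Unity. This is an illustrative alternative to the `StructToBytes` and `getWave_Header` pair in the listing, not the author's method: it writes the 44-byte header with `BinaryWriter`. `WavBuilder` and `FromPcmChunks` are hypothetical names, and the defaults assume the 16 kHz, 16-bit, mono format used by the header in the listing.

```csharp
using System;
using System.Collections.Generic;
using System.IO;
using System.Text;

public static class WavBuilder
{
    // Concatenates raw PCM chunks and prepends a standard 44-byte WAV header.
    public static byte[] FromPcmChunks(IEnumerable<byte[]> chunks,
        int sampleRate = 16000, short channels = 1, short bitsPerSample = 16)
    {
        using (var pcm = new MemoryStream())
        {
            // Combine the segments in arrival order.
            foreach (byte[] chunk in chunks)
            {
                pcm.Write(chunk, 0, chunk.Length);
            }
            int dataLen = (int)pcm.Length;
            short blockAlign = (short)(channels * bitsPerSample / 8);

            using (var wav = new MemoryStream())
            using (var w = new BinaryWriter(wav))
            {
                w.Write(Encoding.ASCII.GetBytes("RIFF"));
                w.Write(36 + dataLen);              // file size minus 8 bytes
                w.Write(Encoding.ASCII.GetBytes("WAVE"));
                w.Write(Encoding.ASCII.GetBytes("fmt "));
                w.Write(16);                        // fmt chunk size
                w.Write((short)1);                  // format tag: PCM
                w.Write(channels);
                w.Write(sampleRate);
                w.Write(sampleRate * blockAlign);   // bytes per second
                w.Write(blockAlign);
                w.Write(bitsPerSample);
                w.Write(Encoding.ASCII.GetBytes("data"));
                w.Write(dataLen);
                w.Write(pcm.ToArray());
                w.Flush();
                return wav.ToArray();
            }
        }
    }
}
```

The returned array has the layout that the `Clip` method in the listing parses, so it could be passed to `Clip` on the main thread to obtain an `AudioClip`.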

VI. Project location

Link: https://pan.baidu.com/s/1X83tzFAaRLFvPeleT2Pm0A

Extraction code: a1oj

You only need to put the AIUI folder from the SDK into the StreamingAssets folder and replace the aiui.dll file.